Clinical AI Brief · Issue #1 · February 12, 2026

The AI signal for healthcare leaders who don’t have time for the noise.

Welcome to the first issue of Clinical AI Brief. Each week, we distill the healthcare AI developments that actually matter for hospital and health system leaders — regulatory deadlines, safety signals, implementation lessons, and research worth your time. No vendor press releases repackaged as insight. No breathless hype. Just the signal.

Let’s get to it. There’s a lot this week, and one of your deadlines is in four days.

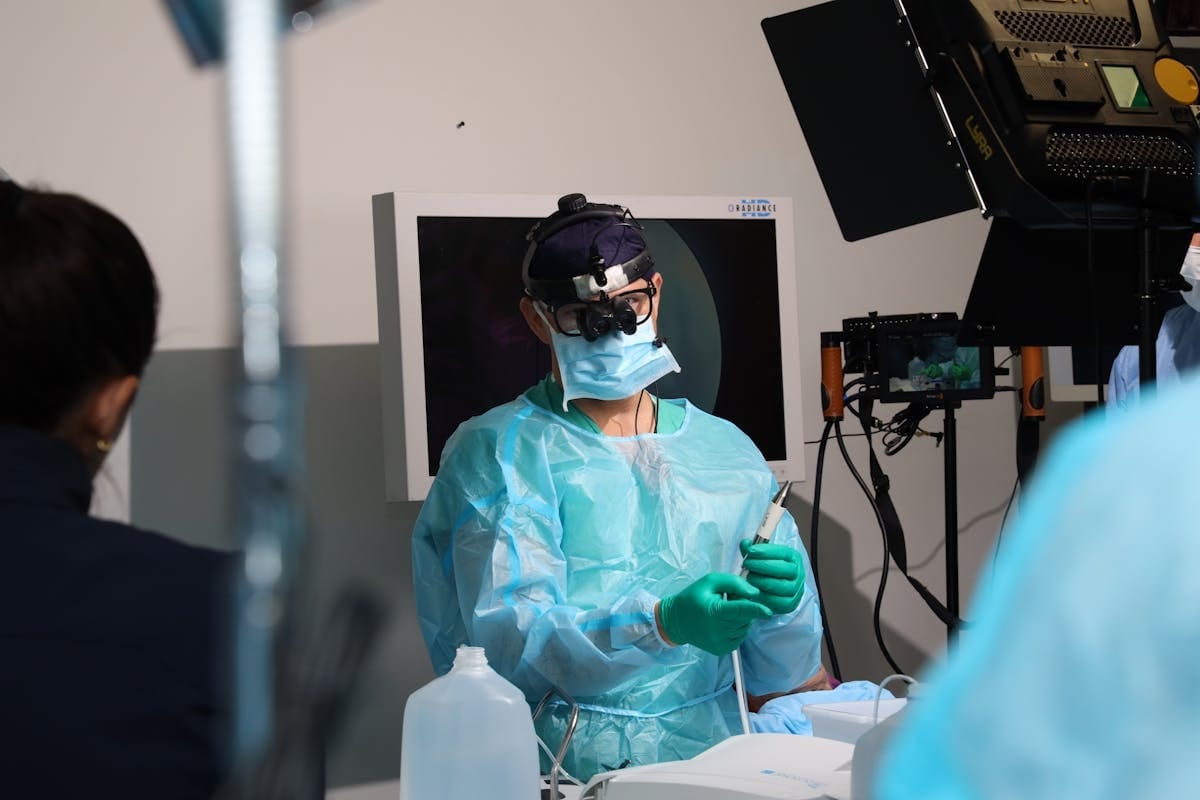

🔴 Lead Story: Reuters Exposes AI Failures in the Operating Room

When AI misidentifies anatomy during surgery, the consequences aren’t theoretical. They’re measured in patient harm.

On February 9, Reuters published a sweeping investigation documenting cases where AI-powered surgical navigation systems gave incorrect guidance to surgeons, including misidentified body parts and allegedly botched procedures involving Integra LifeSciences’ TruDi Navigation System. The investigation drew on safety records, lawsuits, and interviews with clinicians, scientists, and regulators.

Integra LifeSciences has stated “there is no credible evidence to show any causal connection between the TruDi Navigation System, AI technology, and any alleged injuries.” But the broader finding is harder to dismiss: device makers and tech companies are racing AI into operating rooms faster than post-market surveillance can track outcomes.

A companion Reuters piece broadens the lens to AI’s rapid, sometimes reckless, entry into clinical practice more generally.

What this means for you: If your health system uses AI-assisted surgical navigation, initiate a risk review now. Ensure clinical engineering and patient safety teams have adverse event reporting workflows that specifically account for AI-related failures. This story will generate board-level questions — better to have answers ready.

⚖️ Regulatory Watch

🚨 HIPAA NPP Deadline: February 16 — Four Days Away

All HIPAA covered entities processing substance use disorder records under 42 CFR Part 2 must update their Notice of Privacy Practices by Feb 16, 2026. The 2024 rule changes aligned Part 2 with HIPAA, requiring updated NPPs explaining how SUD patient information is handled. If you have behavioral health or addiction treatment programs, this is non-negotiable. Fenwick | Brownstein

HIPAA Security Rule Overhaul — May 2026

HHS is expected to finalize a major Security Rule update that converts “addressable” safeguards to mandatory technical controls. This is the biggest structural change to the Security Rule in years. CISOs should begin gap assessments now, especially for AI systems handling ePHI. Healthcare Law Insights | Asimily

Utah Lets AI Renew Prescriptions Without FDA Oversight

Utah became the first state to allow AI to autonomously renew prescriptions without clinician input, via startup Doctronic. The company claims this is “the practice of medicine,” not a medical device, potentially sidestepping FDA jurisdiction entirely. STAT News

FDA Clears First AI Lung Cancer Detection + Diagnosis Device

Median Technologies received 510(k) clearance for eyonis® LCS, the first AI-based combined detection and diagnosis device for lung cancer screening on low-dose CT. BusinessWire | Diagnostic Imaging

🏥 Implementation Spotlight: Mass General Brigham — AI Meets Physician Pushback

Mass General Brigham, Massachusetts’ largest health system, launched AI-assisted telehealth for primary care using K Health’s AI platform to triage and support virtual visits. KFF Health News

The problem isn’t the technology. It’s the rollout. MGB primary care physicians have organized union efforts, citing concerns that AI is being prioritized over adequate staffing. A Boston Business Journal report detailed physician complaints that the system “has ignored their concerns about staffing, AI implementation and a $400 million investment promise.”

The lesson: AI tools cannot substitute for workforce engagement strategy. If your implementation roadmap doesn’t include a clinician engagement plan, you don’t have a roadmap. You have a hope.

🔒 Security & Privacy

Conduent Breach Expands to 25M+ Victims

Conduent Business Services now reports over 25 million individuals affected by its data breach. At least 10 federal class action lawsuits have been filed, and HHS OCR is investigating. Credit monitoring deadline: March 31, 2026. HIPAA Journal | AllAboutLawyer

HIPAA + AI: Mind the Compliance Gap

A HIPAA Journal analysis flags critical compliance gaps: AI training data provenance, third-party AI vendor oversight, and BAA requirements. ⚠️ If you’re deploying AI that touches patient data and haven’t updated your BAA templates, that’s your action item this week.

CISOs, take note: if finalized as expected, the mandatory Security Rule controls will apply to every AI system handling ePHI. Inventory all AI tools touching patient data now.

📚 Research Roundup

Prima: AI Reads Brain MRIs in Seconds With Up to 97.5% Accuracy

University of Michigan researchers developed Prima, a vision language model that interprets brain MRIs in seconds with up to 97.5% accuracy. Published in Nature Biomedical Engineering. The catch: it’s a single-institution study and needs external validation. ScienceDaily

LLMs No Better Than Google for Patient Health Decisions

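A randomized study in Nature Medicine found that, tested on their own, AI models identified conditions correctly 94.9% of the time but chose the correct course of action only 56.3% of the time. And when participants used those models for help, they did no better than a control group relying on their usual resources, such as internet search. Reuters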

PRIMARY-AI: Standards Framework for AI in Primary Care

Also in Nature Medicine, a new outcomes-based standards framework for safeguarding primary care in the AI era.

💼 Vendor Pulse

Midstream Health + CommonSpirit Health: a16z-backed Midstream partnered with CommonSpirit for AI-powered financial operations. CommonSpirit is both investor and first customer. GlobeNewswire

Synthpop raises $15M Series A (total $23M) for healthcare “agentic AI” (multi-agent workflow automation). Strategic investor: former Humana CEO Bruce Broussard. BusinessWire

AI Optics Sentinel Camera: FDA 510(k) clearance for handheld AI retinal imaging. Modern Retina

📝 The Bottom Line

This week’s news tells one story with uncomfortable clarity: capability is outpacing governance. Reuters documented reports of patients harmed by AI in the OR. A Nature Medicine study found people using AI chatbots made health decisions no better than those relying on internet search. Mass General Brigham is learning that deploying AI without workforce alignment creates as many problems as it solves. Utah is letting AI renew prescriptions without FDA oversight. And your HIPAA compliance calendar just got heavier.

The organizations that will navigate this era well aren’t the ones adopting AI fastest. They’re the ones investing equally in the safety frameworks, workforce engagement strategies, and regulatory infrastructure to deploy it responsibly. The question is no longer whether to adopt AI. It’s whether you have the organizational maturity to do it without harming patients, alienating clinicians, or triggering the enforcement action that’s clearly coming.

👀 What to Watch

Feb 16, 2026 — HIPAA NPP update deadline (42 CFR Part 2 alignment). Four days. Move.

Feb 22–25, 2026 — ViVE 2026, Los Angeles. Expect major healthcare AI announcements. We’ll have coverage.

May 2026 (est.) — HIPAA Security Rule finalization. Mandatory controls replacing “addressable” safeguards.

Clinical AI Brief is published weekly. We read the firehose so you get the signal.

Questions, tips, or stories we should track? Reply to this email.

Stay sharp. Stay informed. Stay skeptical.

— The Clinical AI Brief Team

Why Clinical AI Brief Exists

This newsletter was born from a simple frustration — and a deep conviction.

After more than twenty years leading IT in healthcare, I’ve watched technology transform patient care in ways that would have seemed like science fiction when I started. I’ve seen electronic records replace paper charts, telehealth connect rural patients to specialists hundreds of miles away, and analytics tools flag deteriorating patients before clinicians could spot them. Every one of those advances started with someone asking: how do we take better care of people?

AI is the most powerful tool to arrive at that question in a generation. And I believe, with everything I’ve seen over two decades, that it will save lives, reduce suffering, and give clinicians back the time that bureaucracy stole from them. That’s not hype. That’s the trajectory I’ve watched unfold, and it’s accelerating.

But here’s what keeps me up at night: the gap between what AI can do and what we’re prepared to govern is growing faster than any technology gap I’ve seen in my career. And in healthcare, gaps don’t show up as bugs or outages. They show up as patient harm. As breaches. As clinicians losing trust in the systems they depend on.

Healthcare IT leaders don’t need more noise. You’re already drowning in vendor pitches, conference keynotes, and breathless LinkedIn posts about how AI will change everything. What you need is someone who understands both the promise and the weight of this moment — someone who has sat in the same chair you’re sitting in, making decisions that affect patient care, and who refuses to let hype outrun honesty.

That’s what Clinical AI Brief is. A weekly signal from inside the trenches. Every claim sourced. Every risk acknowledged. No sponsors influencing what we cover. Just the intelligence you need to lead well in the most consequential technology shift healthcare has ever faced.

Because at the end of the day, this isn’t about algorithms or architectures. It’s about the patient in the bed, the nurse at the bedside, and the leaders making decisions that ripple through both their lives. Getting this right isn’t just a professional obligation. It’s a moral one.

Welcome to Issue #1. I’m glad you’re here.

Know someone who needs the signal? Share Clinical AI Brief — it’s free.